Last updated: 08.10.2018

This article was originally published in French on the “Intelligence Mecanique” blog, a Scilogs blog associated with the magazine “Pour la Science”.

Target audience: all audiences, high school students

Keywords: activation function, modeling, artificial neuron, biological neuron, science popularization

To reconstruct concepts related to the brain, study them, and simulate how they work, researchers use artificial neural networks. Many types of networks exist today to represent different types of memory, which is why we speak of models.

For this new series of articles on neural networks, cognitive functions, and our mechanical intelligence, it is good to go back to the basics and remind ourselves of some fundamentals!

Biological Neuron vs Artificial Neuron

You have often heard and read it: the basic unit of an artificial neural network is the artificial neuron, sometimes also called a formal neuron, which is a mathematical and computational representation of a biological neuron.

A biological neuron receives inputs, or signals, transmitted by other neurons (dendrite-synapse interaction). At the level of the cell body (soma), the neuron analyzes and processes these signals by summing them. If the result is higher than the activation (or excitability) threshold, the neuron sends a discharge, called an action potential, along its axon towards other biological neurons.

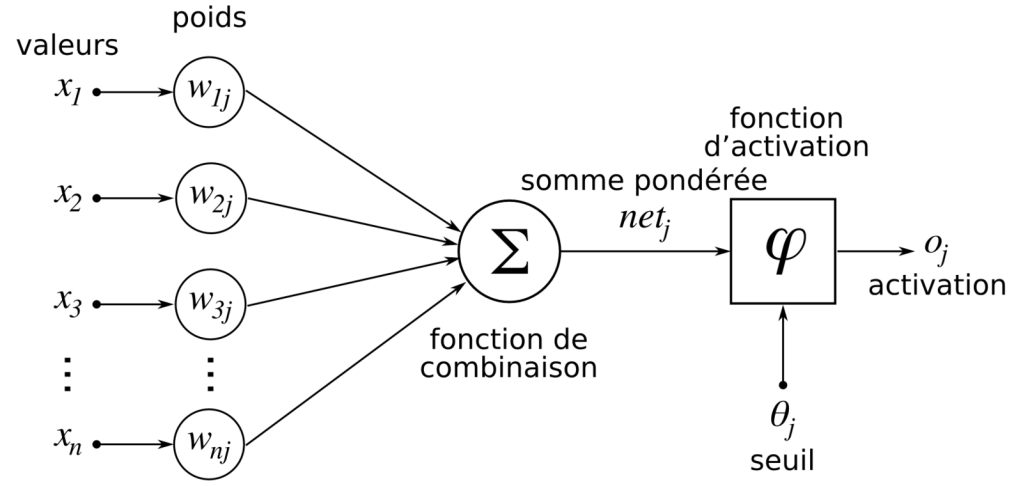

An artificial neuron is a schematic representation of a biological neuron:

- Synapses are modeled by weights.

- The soma, or cell body, is modeled by the transfer function, also called the activation function.

- The axon is modeled by the output element.
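The correspondence listed above can be sketched in a few lines of Python. This is only an illustrative sketch, not a reference implementation: the class name `ArtificialNeuron`, the `step` function and its threshold of 1.0 are assumptions chosen for the example.

```python
from dataclasses import dataclass
from typing import Callable, Sequence


def step(total: float, threshold: float = 1.0) -> float:
    """Fire (1.0) when the summed input reaches the threshold, else stay silent (0.0)."""
    return 1.0 if total >= threshold else 0.0


@dataclass
class ArtificialNeuron:
    # The weights play the role of the synapses.
    weights: Sequence[float]
    # The activation (transfer) function plays the role of the soma.
    activation: Callable[[float], float] = step

    def output(self, inputs: Sequence[float]) -> float:
        """Play the role of the axon: return the value passed on to other neurons."""
        if len(inputs) != len(self.weights):
            raise ValueError("expected exactly one input per weight")
        total = sum(x * w for x, w in zip(inputs, self.weights))
        return self.activation(total)


# Hypothetical neuron with three incoming connections.
neuron = ArtificialNeuron(weights=[0.5, 0.5, 1.0])
print(neuron.output([1.0, 1.0, 0.0]))  # summed input is 1.0: the threshold is reached
print(neuron.output([1.0, 0.0, 0.0]))  # summed input is 0.5: the neuron stays silent
```

Passing another function as `activation` changes how the neuron responds without touching the weights, which mirrors the separation between synapses and soma described above.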

Mathematical operation of the artificial neuron

A formal neuron, just like a biological neuron, receives several stimuli via its weights. It analyzes this information and then produces a result.

Let’s take a closer look:

- Each weight has a value denoted $w_{ij}$. This notation, the most common in the scientific literature, designates the weight going from formal neuron i to formal neuron j.

- Each weight transmits a piece of information (i.e. a stimulus) coming from the source neuron i, denoted $x_i$.

- This stimulus (its value), corresponding to the information sent by the source neuron i, is modulated by the weight linking neurons i and j. Mathematically, this translates into: $x_i \times w_{ij}$

- Thus neuron j receives as many stimuli as it has incoming weights, and it sums them.

If we denote by n the number of source neurons linked to neuron j, a more complete mathematical notation would be:

$$\sum_{i=1}^{n} x_i \times w_{ij}$$

This expression reads as follows: “the sum of all the products of the values of the n source neurons and the weights connecting these source neurons to the considered neuron j” (i taking the values 1, 2, …, n).

It is this sum that the formal neuron j must then process! It uses the activation function for this.
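To make this sum concrete, here is a minimal Python sketch. The names are assumptions made for the example: `x` holds the values sent by the source neurons and `w_j` holds the weights connecting each of them to neuron j. The numbers themselves are made up.

```python
from typing import Sequence


def weighted_sum(inputs: Sequence[float], weights: Sequence[float]) -> float:
    """Return the sum of (source value * weight) over all source neurons of neuron j."""
    if len(inputs) != len(weights):
        raise ValueError("each source neuron needs exactly one weight")
    return sum(x_i * w_ij for x_i, w_ij in zip(inputs, weights))


# Hypothetical values for n = 3 source neurons connected to neuron j.
x = [1.0, 0.5, -2.0]     # values sent by source neurons 1, 2 and 3
w_j = [0.5, 2.0, 0.25]   # weights from source neurons 1, 2 and 3 to neuron j

total = weighted_sum(x, w_j)
print(total)  # 1.0*0.5 + 0.5*2.0 + (-2.0)*0.25 = 0.5 + 1.0 - 0.5 = 1.0
```

A negative input or a negative weight lowers the total, so a connection can work against the neuron reaching its threshold as well as towards it.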

Activation function in an artificial neuron and outgoing information

In the field of signal processing, a transfer function* is “a mathematical model of the relationship between the input x and the output y of a linear system, most often invariant”.

| * “Activation function” can be translated into French as “une fonction d’activation” but also as “une fonction de transfert” (transfer function). |

In the field of neural networks, this function can also be called a combination function, a thresholding function or an activation function. Biologically, the idea comes from mimicking the action potential of a biological neuron: if the sum of the input stimuli of a neuron reaches its excitability threshold, then this neuron produces an output (it discharges).

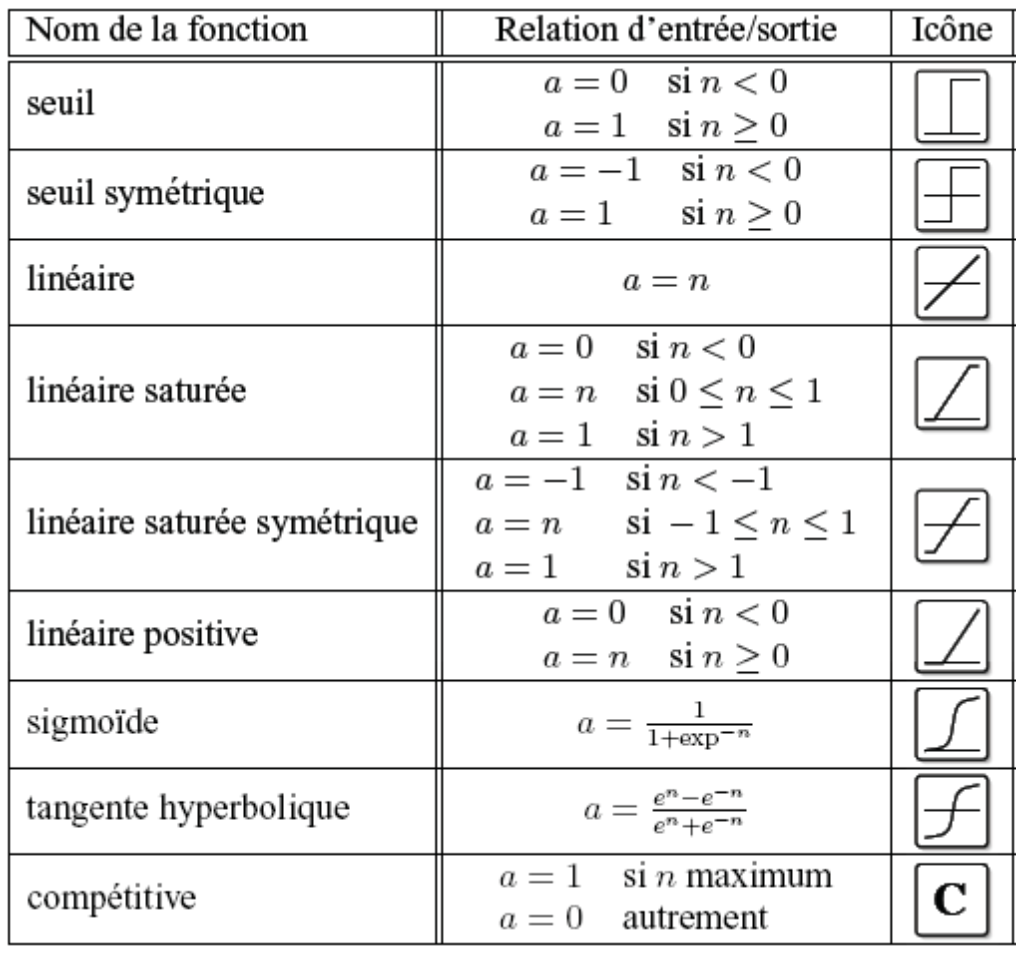

Several mathematical functions can be used:

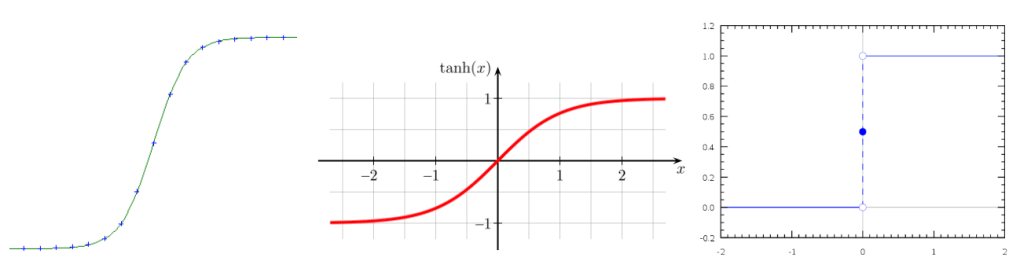

Here we will briefly discuss the Sigmoid function, the Hyperbolic Tangent and the Heaviside function.

- The Sigmoid function is often used because its derivative is simple to compute, which simplifies the computations when training the neural network.

- The Hyperbolic Tangent function is a version of the Sigmoid function that is symmetric about zero: its outputs range from -1 to 1 instead of from 0 to 1.

- The Heaviside function produces binary outputs: 1 if the threshold has been reached, 0 otherwise.
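As a hedged illustration, these three functions can be written as follows in Python. The sigmoid is taken here to be the logistic function 1 / (1 + e^(-x)), which is the usual choice but an assumption with respect to this article. Under that assumption its derivative is sigmoid(x) * (1 - sigmoid(x)), and the hyperbolic tangent equals 2 * sigmoid(2x) - 1. The default Heaviside threshold of 0 is also an assumption.

```python
import math


def sigmoid(x: float) -> float:
    """Logistic sigmoid: squashes any real input into the range 0 to 1."""
    if x >= 0:
        return 1.0 / (1.0 + math.exp(-x))
    # For negative inputs, use an equivalent form that cannot overflow.
    e = math.exp(x)
    return e / (1.0 + e)


def sigmoid_derivative(x: float) -> float:
    """Derivative of the sigmoid, computed from the sigmoid value itself."""
    s = sigmoid(x)
    return s * (1.0 - s)


def heaviside(x: float, threshold: float = 0.0) -> float:
    """Binary output: 1.0 once the threshold is reached, 0.0 otherwise."""
    return 1.0 if x >= threshold else 0.0


# The hyperbolic tangent is available directly as math.tanh.
for value in (-2.0, 0.0, 2.0):
    print(value, sigmoid(value), math.tanh(value), heaviside(value))
```

At an input of 0 the sigmoid returns 0.5 and the hyperbolic tangent returns 0, which shows the shift between the two curves. The derivative needs only one multiplication once the sigmoid value is known, which is the computational convenience mentioned above.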

Other variants exist depending on the architecture and the tasks to be modeled. The choice of one transfer function over another is empirical: it is made either somewhat arbitrarily, according to the task and the architecture (the structure) of the neural network, or by drawing on previous work from the scientific literature.

To learn more:

Computational neuroscience

Let’s highlight a very specific scientific field that studies different models of biological neurons: computational neuroscience.

This field is entirely dedicated to understanding neuronal interactions and their involvement in brain function.

It is a field that cannot be ignored when studying human cognitive functions (such as reasoning or decision making) or certain pathologies, such as neurodegenerative diseases like Alzheimer’s and Parkinson’s.

What now?

Understanding the structure of a formal neuron, also called an artificial neuron, is the basis for understanding an artificial neural network.

It is by connecting two or more formal neurons together that we form a neural network, or neural net. The way these neurons are arranged is referred to as the architecture of the network.
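As an illustration, here is a small Python sketch of one possible arrangement: two neurons receive the same inputs, and their outputs feed a third neuron. The layered layout, the sigmoid activation, the function names and all weight values are assumptions chosen for the example, not a prescribed architecture.

```python
import math
from typing import List, Sequence


def sigmoid(x: float) -> float:
    """Logistic sigmoid, used here as the activation function of every neuron."""
    return 1.0 / (1.0 + math.exp(-x))


def neuron_output(inputs: Sequence[float], weights: Sequence[float]) -> float:
    """One formal neuron: weighted sum of the inputs, then activation."""
    return sigmoid(sum(x * w for x, w in zip(inputs, weights)))


def layer_output(inputs: Sequence[float],
                 layer_weights: Sequence[Sequence[float]]) -> List[float]:
    """A layer of neurons; each row holds the incoming weights of one neuron."""
    return [neuron_output(inputs, weights) for weights in layer_weights]


# Hypothetical weights: two intermediate neurons, then one output neuron.
hidden_weights = [[0.5, -0.5], [1.0, 1.0]]
output_weights = [[1.0, -1.0]]

inputs = [1.0, 1.0]
hidden = layer_output(inputs, hidden_weights)   # outputs of the two intermediate neurons
result = layer_output(hidden, output_weights)   # output of the final neuron
print(hidden, result)
```

Changing the number of rows in `hidden_weights`, or chaining more calls to `layer_output`, gives a different architecture built from the same formal neuron.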

Many architectures exist, and each is effective in handling a given problem (a given task): image recognition, classification, translation, etc.

To cite this article:

Ikram Chraibi Kaadoud & Thierry Vieville. Let’s go back to the basics: Artificial neuron, biological neuron. English version of the publication “Reprenons les bases : Neurone artificiel, Neurone biologique” from the blog “Intelligence Mecanique” http://www.scilogs.fr/intelligence-mecanique, 2018

References

Touzet, Claude. les réseaux de neurones artificiels, introduction au connexionnisme. EC2, 1992.