Last update: 25/10/2022

I am very happy to share with you my first paper in developmental robotics (to learn more about this field, click here), published in Neural Networks, a journal with an impact factor of 9.657 that specializes in artificial intelligence and cognitive neuroscience.

| I. Chraibi Kaadoud, A. Bennetot, B. Mawhin, V. Charisi & N. Díaz-Rodríguez (2022). “Explaining Aha! moments in artificial agents through IKE-XAI: Implicit Knowledge Extraction for eXplainable AI”. Neural Networks, 155, pp. 95-118. doi:10.1016/j.neunet.2022.08.002 |

First, I would like to thank the Editor in Chief, the reviewers, the journal manager, and their teams for their assistance and guidance. I would also like to thank the passionate team with whom I collaborated on this paper and who pushed me to go further to obtain better results: Adrien Bennetot, Barbara Mawhin, Vicky Charisi, and last but not least, Natalia Díaz-Rodríguez. Thank you, you brought me a lot both scientifically and personally!

What is the article about? We studied how knowledge develops in autonomous agents, taking inspiration from the cognitive development of children!

We asked ourselves the following question: “From the observation of an artificial agent’s behavior alone, i.e. without access to its internal parameters, can we extract its knowledge of the world and the evolution of this knowledge, and thus interpret its behavior, whether it is optimal (the agent found the best solution) or not?”

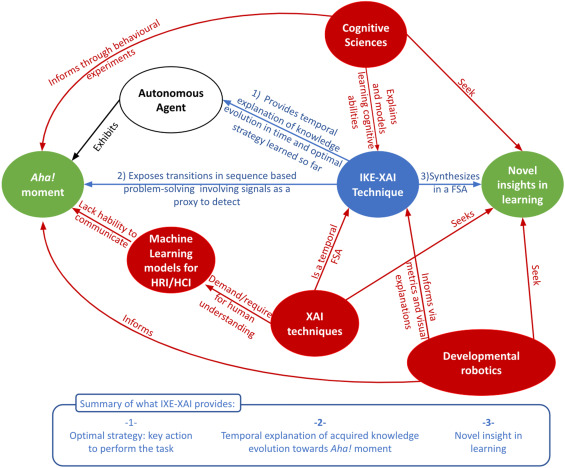

How did we tackle the subject? We positioned ourselves in the field of Explainable Reinforcement Learning for developmental robotics and drew inspiration from approaches in cognitive modeling and from work in Explainable AI and Interpretable Machine Learning. Fig. 1 gives a conceptual summary of our work.

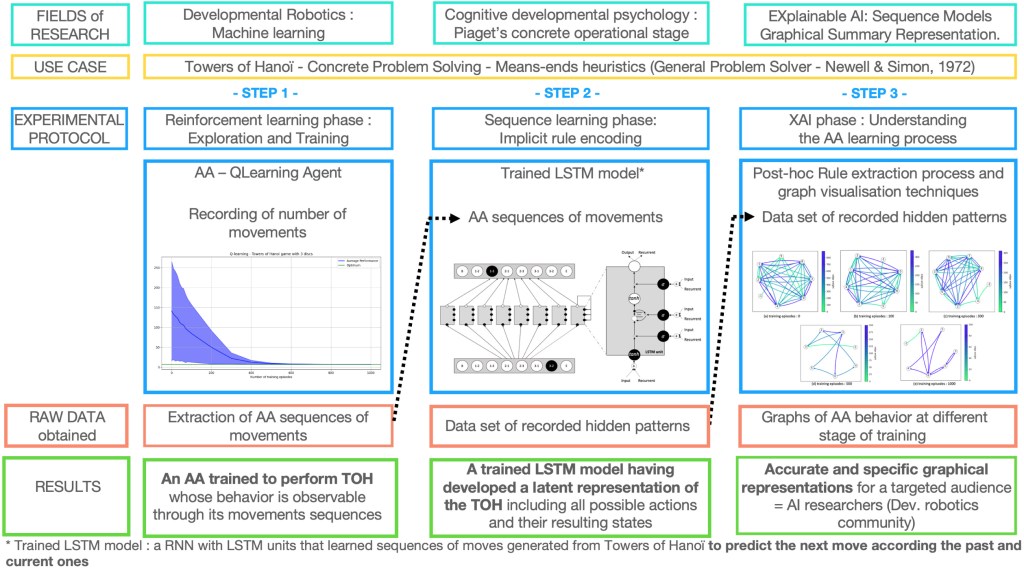

The context? We chose the Tower of Hanoi (TOH) task, which is widely used in cognitive psychology to observe the problem-solving abilities of children. It is also widely used in reinforcement learning to test artificial agents.
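For readers who have never seen the TOH task written down, here is a minimal Python sketch of it. It is only an illustration, not the code from the paper: the state encoding (three tuples of disks, listed bottom to top) and the function names are my own assumptions. A breadth-first search over legal moves recovers the well-known minimum of 2^n - 1 moves for n disks.

```python
from collections import deque


def legal_moves(state):
    """Return all (source_peg, target_peg) pairs allowed in `state`.

    Assumed encoding: `state` is a tuple of 3 tuples, each listing the disks
    on a peg from bottom to top; a larger number means a larger disk.
    """
    moves = []
    for src in range(3):
        if not state[src]:
            continue  # nothing to pick up from an empty peg
        disk = state[src][-1]
        for dst in range(3):
            # A disk may only go on an empty peg or on a larger disk.
            if dst != src and (not state[dst] or state[dst][-1] > disk):
                moves.append((src, dst))
    return moves


def apply_move(state, move):
    """Return the new state after moving the top disk of src onto dst."""
    src, dst = move
    pegs = [list(peg) for peg in state]
    pegs[dst].append(pegs[src].pop())
    return tuple(tuple(peg) for peg in pegs)


def shortest_solution_length(n_disks):
    """Breadth-first search from 'all disks on peg 0' to 'all disks on peg 2'."""
    start = (tuple(range(n_disks, 0, -1)), (), ())
    goal = ((), (), tuple(range(n_disks, 0, -1)))
    seen = {start}
    queue = deque([(start, 0)])
    while queue:
        state, depth = queue.popleft()
        if state == goal:
            return depth
        for move in legal_moves(state):
            nxt = apply_move(state, move)
            if nxt not in seen:
                seen.add(nxt)
                queue.append((nxt, depth + 1))
    return None


if __name__ == "__main__":
    for n in (1, 2, 3):
        print(n, "disks:", shortest_solution_length(n), "moves")
```

With 3 disks the search space has only 27 states, which is part of what makes the task convenient for studying both children and artificial agents.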

Our main contribution? A 3-step methodology named Implicit Knowledge Extraction with eXplainable Artificial Intelligence (IKE-XAI), which extracts, in the form of automata, the implicit knowledge encoded by an artificial agent during its learning process. It relies on 1) a Q-learning agent that learns to perform the TOH task; 2) a trained recurrent neural network that encodes an implicit representation of the TOH task; and 3) an XAI process that uses a post-hoc implicit rule extraction algorithm to extract finite state automata. We propose to use graphical representations as visual and explicit explanations of the Q-learning agent’s behavior. Fig. 2 presents the experimental design of the IKE-XAI methodology in detail.
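To make step 1 more concrete, here is a minimal sketch of a tabular Q-learning agent on a 3-disk TOH task. It is an illustration under my own assumptions, not the implementation used in the paper: the reward of -1 per move, the hyperparameters (`alpha`, `gamma`, `epsilon`, number of episodes), and all function names are hypothetical choices. Steps 2 and 3 (the recurrent network and the automaton extraction) are not shown here.

```python
import random
from collections import defaultdict

N_DISKS = 3
START = (tuple(range(N_DISKS, 0, -1)), (), ())  # all disks on peg 0
GOAL = ((), (), tuple(range(N_DISKS, 0, -1)))   # all disks on peg 2


def legal_moves(state):
    """All (source_peg, target_peg) pairs that respect the TOH rules."""
    moves = []
    for src in range(3):
        if not state[src]:
            continue
        disk = state[src][-1]
        for dst in range(3):
            if dst != src and (not state[dst] or state[dst][-1] > disk):
                moves.append((src, dst))
    return moves


def step(state, move):
    """Apply a move; assumed reward is -1 per move, episode ends at GOAL."""
    src, dst = move
    pegs = [list(peg) for peg in state]
    pegs[dst].append(pegs[src].pop())
    nxt = tuple(tuple(peg) for peg in pegs)
    return nxt, -1.0, nxt == GOAL


def train(episodes=3000, alpha=0.5, gamma=0.95, epsilon=0.1,
          max_steps=500, seed=0):
    """Tabular Q-learning with an epsilon-greedy behavior policy."""
    rng = random.Random(seed)
    q = defaultdict(float)  # Q-values keyed by (state, move), default 0
    for _ in range(episodes):
        state = START
        for _ in range(max_steps):
            moves = legal_moves(state)
            if rng.random() < epsilon:
                move = rng.choice(moves)  # explore
            else:
                move = max(moves, key=lambda m: q[(state, m)])  # exploit
            nxt, reward, done = step(state, move)
            best_next = 0.0 if done else max(
                q[(nxt, m)] for m in legal_moves(nxt))
            # Standard Q-learning update toward the bootstrapped target.
            q[(state, move)] += alpha * (
                reward + gamma * best_next - q[(state, move)])
            state = nxt
            if done:
                break
    return q


def greedy_rollout(q, max_steps=100):
    """Follow the greedy policy from START; return the moves, or None."""
    state, path = START, []
    for _ in range(max_steps):
        if state == GOAL:
            return path
        move = max(legal_moves(state), key=lambda m: q[(state, m)])
        path.append(move)
        state, _, _ = step(state, move)
    return path if state == GOAL else None


if __name__ == "__main__":
    q_table = train()
    print(greedy_rollout(q_table))
```

The sequence of moves produced by such an agent at different points of its training is the kind of observable behavior from which the later steps of the methodology work, since the idea is to explain the agent without looking inside its Q-table.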

Our results? Our experiments show that the IKE-XAI approach helps to understand the Q-learning agent’s behavior development by providing a global explanation of its knowledge evolution during learning. IKE-XAI also allows researchers to identify the agent’s Aha! moment by determining at what point the knowledge representation stabilizes and the agent stops learning.

This work was also an opportunity to ask how the Aha! or Eureka! moment emerges in humans and whether it can be modeled with AI, and thus to start a journey into the field of artificial creativity.

Link to the article: https://doi.org/10.1016/j.neunet.2022.08.002

Link to the GitHub repo (read the readme first!): https://github.com/ichraibi/Extracting_Aha_moment_from_Qlearning_agent_through_IKE-XAI_method.ip